The Computer That Never Stops Thinking

On continuous computing and the death of the discrete

There’s a radio telescope in the Atacama Desert that’s pointed at the cosmic microwave background, the afterglow of the Big Bang. It’s been listening for years. Every few milliseconds, it samples the signal, converts it to digital, and stores it. Later, much later, scientists will sift through that data looking for patterns.

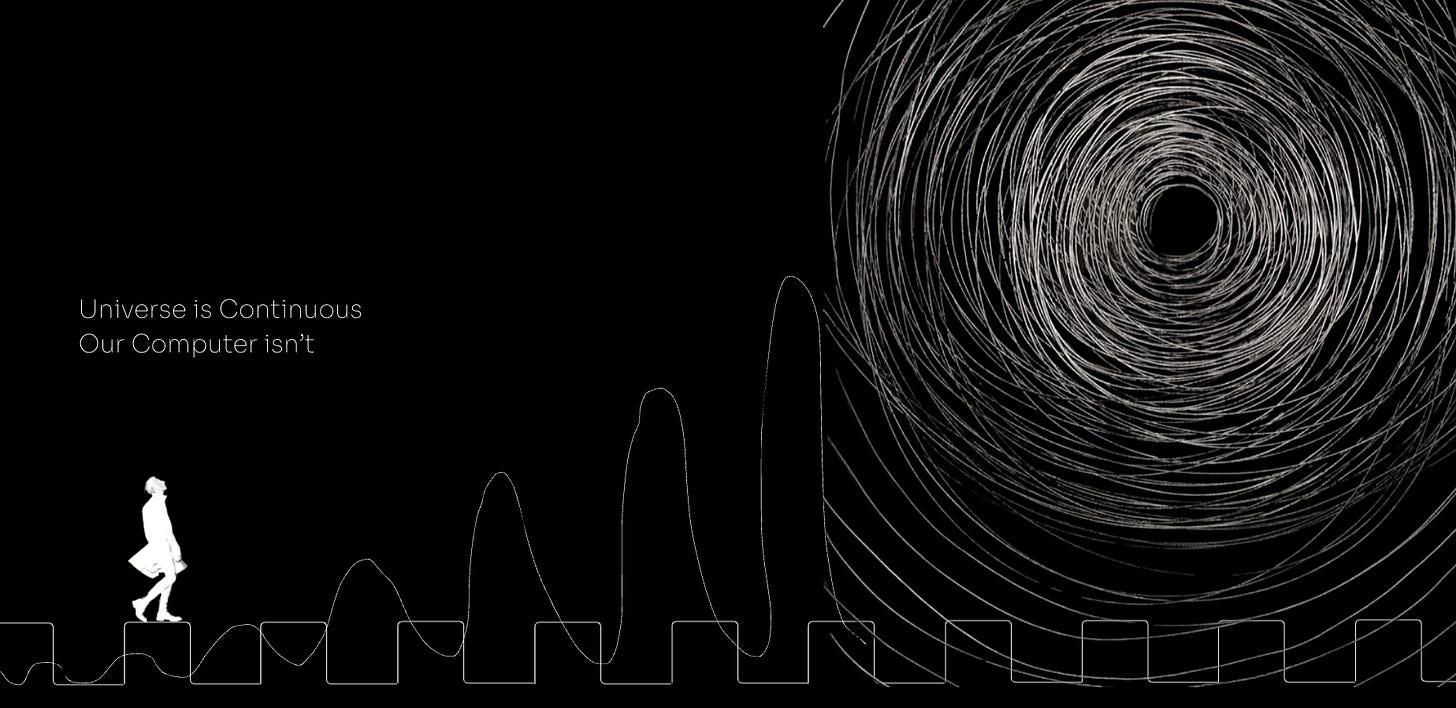

But here’s the thing: in between those samples, the universe is still talking. Photons that traveled 13.8 billion years across space arrive at the dish and find... nothing listening. They hit the metal and disappear into heat. We miss them not because we can’t detect them, but because our computers blink.

Every computer you’ve ever used blinks. It samples the world in discrete chunks (tick, tick, tick) like a movie projector showing 24 frames per second and hoping you don’t notice the darkness in between. For most of computing history, this was fine. Good enough. But increasingly, it’s not.
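To make the blink concrete, here is a toy sketch in Python. Everything in it is made up for illustration: `signal` is a hypothetical source that is silent except for one 0.2 ms burst, and `sample` is a stand-in for a digitizer that only looks at fixed instants. Real instruments are far more sophisticated than this, so treat it as a cartoon of the idea rather than a model of any actual telescope.

```python
def signal(t: float) -> float:
    """Hypothetical signal: flat at 0 except for a 0.2 ms burst starting at t = 1.3 ms."""
    return 1.0 if 1.3e-3 <= t < 1.5e-3 else 0.0


def sample(f, period: float, n: int) -> list[float]:
    """Read f at t = 0, period, 2 * period, ... for n readings in total."""
    return [f(k * period) for k in range(n)]


coarse = sample(signal, 1e-3, 5)   # one reading per millisecond, covering 0 to 4 ms
fine = sample(signal, 1e-4, 50)    # one reading per 0.1 ms, covering 0 to 4.9 ms

print(max(coarse))  # 0.0: the burst falls entirely between readings
print(max(fine))    # 1.0: a faster clock catches it
```

A faster clock narrows the gaps, but it never closes them. There is always a burst short enough to slip through.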

And that got me thinking: what if we built computers that never blink?

The question sounds almost naive when you first hear it. Of course computers work with discrete data—0s and 1s.

That’s what computers are. Silicon can be on or off. Digital.

Except that’s not quite true.

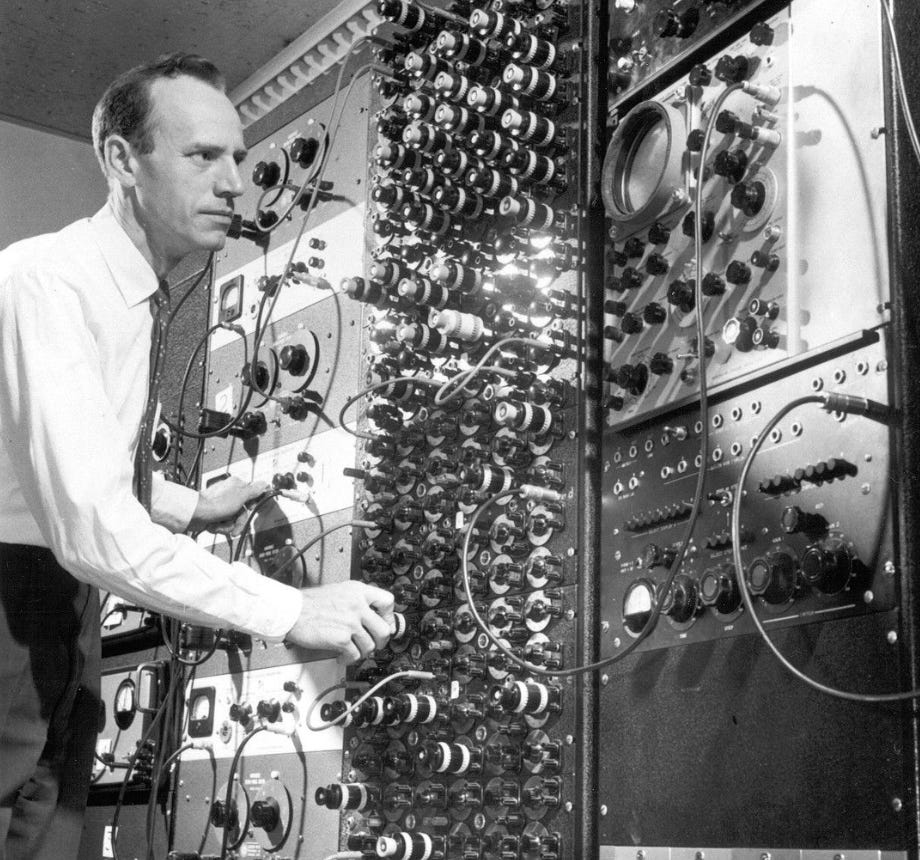

Before there were digital computers, there were analog ones. Real ones, not thought experiments. They filled rooms at MIT and NASA and Boeing in the 1950s and 60s. They solved differential equations by literally being the equations: voltages flowing through circuits represented ballistic trajectories or aircraft dynamics. You’d wire up the problem, turn it on, and watch oscilloscopes draw the answer in real time.
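For contrast, here is roughly how a digital machine handles the simplest ballistic problem: dropping an object from a height. This is my own minimal sketch, not a reconstruction of any historical program. The function name `fall_time` and the 100 m drop are assumptions chosen for illustration. Each line inside the loop plays the role of one integrator in the analog circuit, except that it only advances in ticks of `dt`.

```python
G = 9.81  # gravitational acceleration in m/s^2


def fall_time(height: float, dt: float) -> float:
    """Step a dropped object forward in fixed ticks of dt seconds until it reaches the ground."""
    y, v, t = height, 0.0, 0.0
    while y > 0.0:
        v -= G * dt   # stand-in for the integrator that turns acceleration into velocity
        y += v * dt   # stand-in for the integrator that turns velocity into position
        t += dt
    return t


exact = (2 * 100.0 / G) ** 0.5  # closed-form fall time for a 100 m drop, no air resistance

for dt in (0.5, 0.1, 0.001):
    print(dt, fall_time(100.0, dt), exact)
```

Shrinking `dt` pulls the stepped answer toward the exact one, at the cost of more ticks. The analog machines had no tick at all.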

They were beautiful machines. And we abandoned them.

We abandoned them because they were finicky. Because resistors drift with temperature. Because you couldn’t email an analog computation to your colleague. Because debugging meant literally rewiring patch cables. Because digital won on practicality, not on principle.

But what if we didn’t have to choose?

Enter continuous-variable quantum computing (CVQC).

Stay with me—it’s not as scary as it sounds.

Normal quantum computing uses qubits: things that can be 0, 1, or both. Discrete, like digital. But CVQC uses the continuous properties of light—amplitude, phase, things that flow along a spectrum. It’s quantum mechanics meeting analog computing. A company called Xanadu is actually building these things.

And then there’s this tiny German company, Anabrid, that’s manufacturing modern analog computers. Not as museum pieces, but as actual products, built to solve certain differential equations faster than a digital machine can.

They wire up circuits and the answer just... flows out. Continuously. In real time.

Neither of these is the general-purpose continuous computer I’m about to describe. But they’re proof that we haven’t exhausted the possibilities. That discrete computing isn’t the final answer.

So let’s imagine it.

Imagine someone cracks the code—the physics problems, the noise issues, the programmability nightmare. They build a general-purpose processor that works with continuous values. Not sampling. Not quantizing. Continuous.

What breaks?

Everything, basically.

Start with something mundane: video calls. Right now, there’s always that slight lag—you talk, they hear you 200 milliseconds later, their face updates in discrete frames. You’ve learned to pause before responding because you never know if they’re done talking.

With continuous computing, that processing lag disappears. Not “gets shorter.” Disappears. The signal still has to travel the distance, but nothing along the way waits for the next frame: it flows, processes, and outputs as one unbroken stream. Their face doesn’t update in frames. It just... exists, moving like reality moves.

It sounds like a small thing until you experience it. Then you realize every digital interaction you’ve ever had was slightly underwater.

Or think about medicine. Right now, if you get an MRI, the machine takes discrete slices of your body, processes them separately, and stitches them together. A continuous processor would model your entire body as one dynamic field, processing every interaction in real time.

That’s not a better MRI. That’s a different thing entirely.

A doctor I spoke to put it this way: “We’d go from looking at snapshots to watching the movie. And not just watching—understanding it while it plays.” Tumors don’t grow in discrete jumps. Blood doesn’t flow frame-by-frame. Disease is continuous. Our tools for seeing it shouldn’t be discrete.

But here’s where it gets weird.

If you can model a body continuously, in perfect detail, in real time... what are you doing? Simulating? Or creating a second instance of that person?

The physicist Max Tegmark argues that if a system has the same mathematical structure as consciousness, it is conscious—substrate doesn’t matter. A continuous computer modeling a brain wouldn’t be simulating consciousness. It would be instantiating it.

I asked a neuroscientist about this. She got quiet. Then: “That’s not a philosophical question anymore, is it? That’s an engineering constraint we’d have to design around.”

The more I dug into this, the more I realized continuous computing doesn’t just make things faster or more efficient. It dissolves categories we thought were fundamental.

Real versus simulated. Thinking versus computing. Decision versus prediction.

Consider: right now, AI systems train on data, then make predictions. Two separate modes. A continuous AI would exist in one perpetual state of learning-and-inferring simultaneously. Every microsecond of new data refines the model while the model interprets the data. There’s no boundary.

You’d never ask “when was this model last updated?” The question wouldn’t make sense. It’s always current. Like asking when a river was last updated.
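The closest thing digital systems have today is online learning, where a model predicts and updates on every observation instead of training once and freezing. Here is a minimal sketch of that idea, assuming a one-parameter model and a made-up data stream; `run_online`, the learning rate, and the data are all hypothetical choices of mine.

```python
def run_online(stream, lr: float = 0.05) -> float:
    """Fit y = w * x one observation at a time, predicting before each update."""
    w = 0.0
    for x, y in stream:
        prediction = w * x    # infer with the model as it stands right now
        error = prediction - y
        w -= lr * error * x   # then immediately learn from that same observation
    return w


# Hypothetical stream generated by the rule y = 3x
stream = [(x, 3.0 * x) for x in (1.0, 2.0, 0.5, 1.5) * 200]

print(run_online(stream))  # settles very close to 3
```

Even here the loop still ticks, one sample at a time. A continuous system would have no loop to step through, only the flow.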

And if such a system were watching you—processing every micro-expression, every hesitation in speech, every pattern in your behavior—it wouldn’t predict what you’ll do. It would know what you’ll do, continuously, before you do it.

Maybe you think that’s dystopian. Probably you’re right. But here’s the thing: if one nation or corporation builds this and another doesn’t, the gap wouldn’t be like the gap between a smartphone and a flip phone. It would be like the gap between literacy and illiteracy.

I keep coming back to that radio telescope in Chile.

Right now, we point our best instruments at the sky and capture a tiny fraction of what’s there. We sample frequencies, time-slice signals, throw away complexity. We do this because our computers can’t handle the full stream. So we make choices: look here but not there, listen now but not then.

A continuous computer wouldn’t have to choose. It could process the entire electromagnetic spectrum, all the time, looking for patterns we don’t even know to look for.

What would we find?

I asked Claude, an AI model, this. It said, “Probably nothing. Space is really, really quiet.” Then I probed further, and it replied: “But if there is something, some weird modulation we’ve been sampling right over, some signal in the gaps between our observations, we’d never forgive ourselves.”

There’s a question I keep avoiding, and it’s the one that matters most: is any of this actually possible?

The honest answer is: probably not. At least, not the pure version I’ve been describing.

The universe might be fundamentally discrete at small enough scales. Quantum mechanics hints at it: energy comes in discrete packets, though whether space and time do too is still an open question. The Planck scale might be nature’s pixel. And even if we could build such a processor, the programming problem is... well, it’s not clear there’s a solution. How do you write an “if statement” in a system with no discrete states?
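One partial answer, borrowed from how people already write smooth programs on digital hardware, is to replace the jump with a blend. The sketch below is only an illustration of that trick. I am not claiming a continuous processor would be programmed this way, and the names `hard_if`, `soft_if`, and `sharpness` are mine.

```python
import math


def hard_if(x: float, threshold: float, then: float, otherwise: float) -> float:
    """The digital branch: the output jumps the instant x crosses the threshold."""
    return then if x > threshold else otherwise


def soft_if(x: float, threshold: float, then: float, otherwise: float,
            sharpness: float = 10.0) -> float:
    """A smooth stand-in: blend the two outcomes with a sigmoid instead of jumping."""
    weight = 1.0 / (1.0 + math.exp(-sharpness * (x - threshold)))
    return weight * then + (1.0 - weight) * otherwise


for x in (-1.0, -0.1, 0.0, 0.1, 1.0):
    print(x, hard_if(x, 0.0, 1.0, 0.0), round(soft_if(x, 0.0, 1.0, 0.0), 3))
```

Far from the threshold the two versions agree. Near it, the soft version is never fully one branch or the other, which is exactly the kind of ambiguity a programmer on such a machine would have to live with.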

But here’s what keeps me up at night:

We thought heavier-than-air flight was impossible until it wasn’t.

We thought splitting the atom was impossible until it wasn’t.

We thought training neural networks with billions of parameters was impossible until it wasn’t.

Sometimes impossible just means “no one’s figured out how yet.”

And hybrid approaches might get us close enough. Analog front-ends for signal processing, quantum enhancement for pattern recognition, digital backends for logic and control. That’s not pure continuous computing, but it might capture most of the advantages.

It’s already happening in pieces. Neuromorphic chips that mimic the continuous dynamics of neurons. Optical computers that process light without converting to digital. Analog AI accelerators that do matrix math in the physical world rather than simulating it in digital logic.

None of them alone is the revolution. But together? Maybe they’re the beginning of one.

If we built computers that never stopped thinking, that processed reality as continuously as reality occurs—what would we become?

More capable, certainly. More powerful. Maybe more conscious of things we’ve been blind to.

Or maybe we’d just be lonelier, sitting with machines that understand the universe’s continuous whisper while we’re still stuck in the discrete world of our own perception, blinking, always blinking, missing the light between our thoughts.

Either way, I suspect we’ll build them if they can be built. Because humans always have. We tamed fire even though it burns. We split atoms even though they explode. We’ll build continuous computers because not building them would mean staying in the dark while the universe keeps talking.

And I, for one, would like to hear what it has to say.